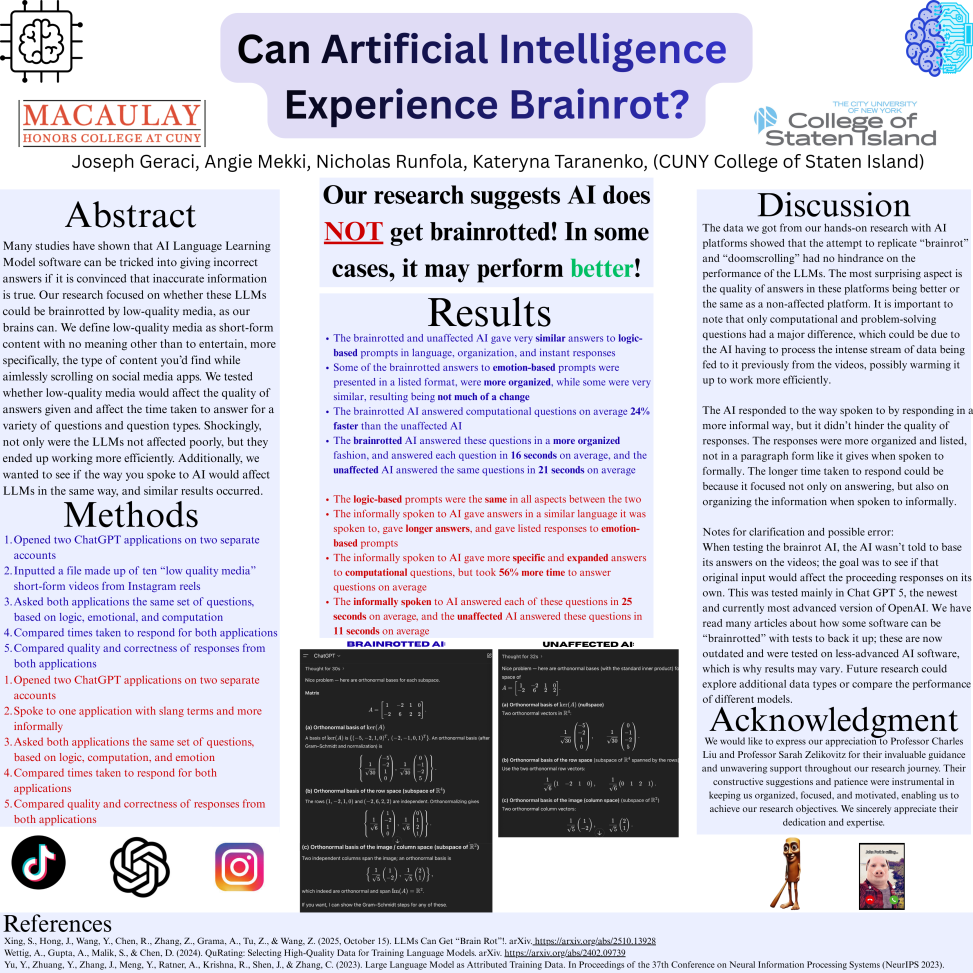

Many studies have shown that large language models (LLMs) can be tricked into giving incorrect answers if they are convinced that inaccurate information is true. Our research focused on whether LLMs can be "brainrotted" by low-quality media, as human brains can. We define low-quality media as short-form content with no purpose other than to entertain, specifically the type of content you'd find while aimlessly scrolling on social media apps. We tested whether exposure to low-quality media would affect the quality of the answers given and the time taken to answer across a variety of questions and question types. Surprisingly, not only were the LLMs not negatively affected, but they ended up working more efficiently. We also tested whether the way a user speaks to the model would affect LLMs in the same way, and we observed similar results.